full_flights = pl.read_csv('./data/On_Time_Reporting_Carrier_On_Time_Performance_(1987_present)_2022_1.csv')

full_flights.shape(537902, 110)To showcase the robust syntax and efficiency of the polars package, we will leverage a moderately-sized dataset, comprising approximately 530,000 rows.

full_flights = pl.read_csv('./data/On_Time_Reporting_Carrier_On_Time_Performance_(1987_present)_2022_1.csv')

full_flights.shape(537902, 110)full_flights.estimated_size('mb')240.02948379516602The way R and Polars handle types reflects a fundamental design difference: R prioritises convenience through implicit coercion, while Polars prioritises correctness through explicit conversion.

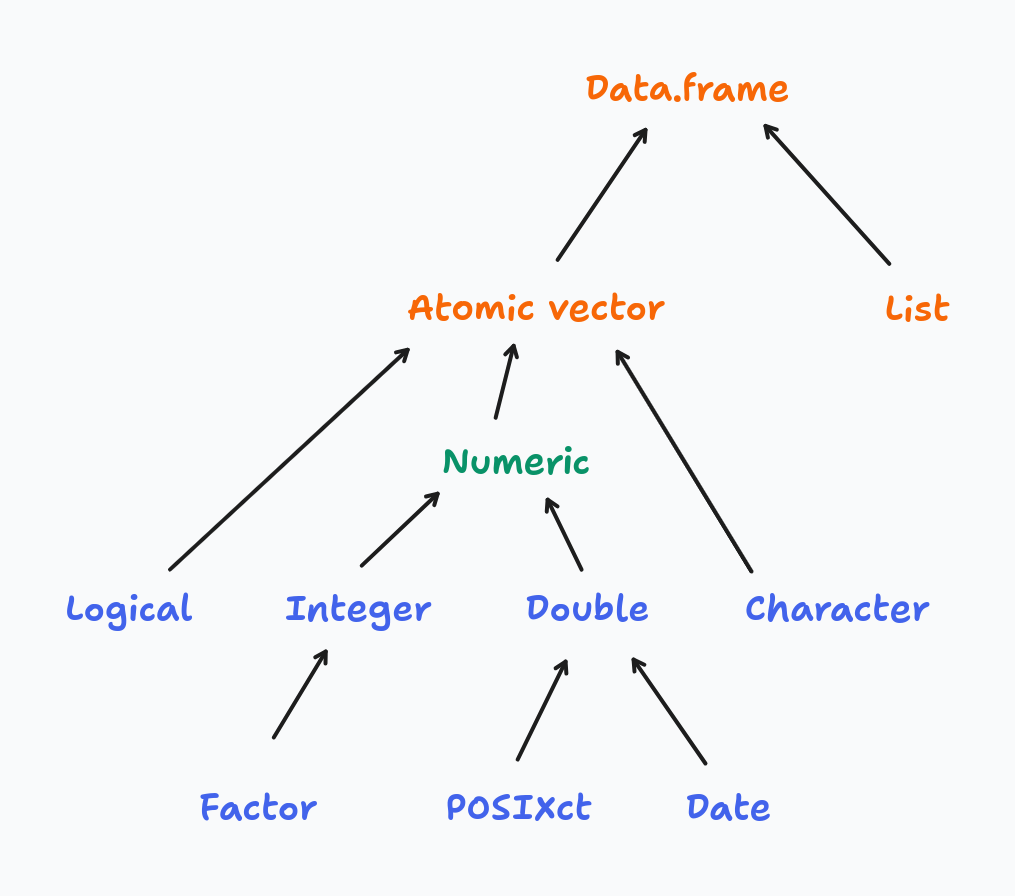

R’s type system is built around four atomic vector types — logical, integer, double, and character — arranged in a coercion hierarchy:

When you combine values of mixed types, R silently promotes them up the hierarchy to find a common type:

# R coerces everything to the "highest" type in the hierarchy

x <- c(TRUE, 1L, 2.5)

typeof(x) # "double" — logical and integer were silently promoted

y <- c(TRUE, 1L, 2.5, "text")

typeof(y) # "character" — everything coerced to stringThis makes interactive exploration forgiving — R rarely stops you with a type error. The cost is that type mismatches surface as subtly wrong results rather than loud errors, which makes bugs hard to trace in larger codebases.

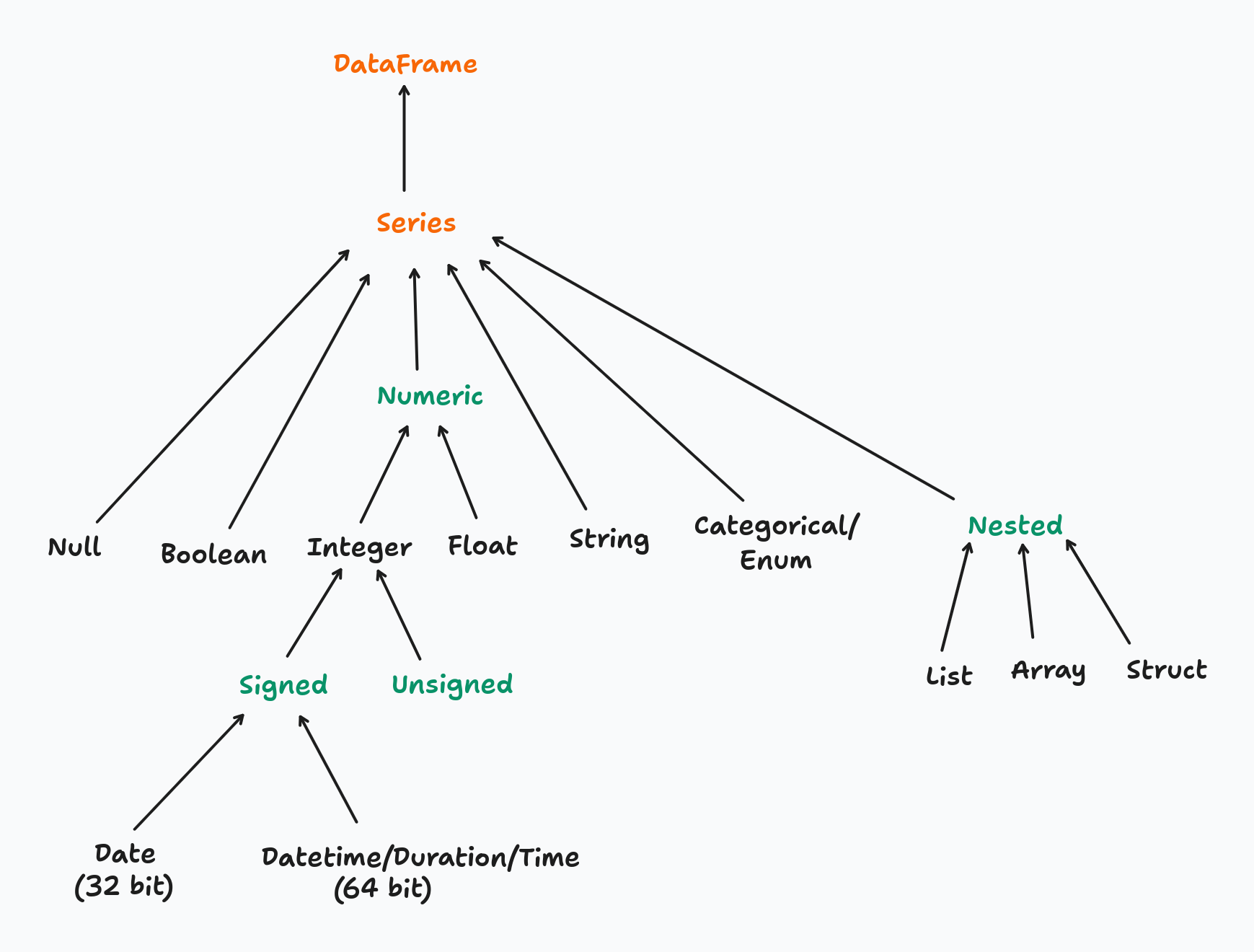

Polars has a much richer type system — granular numeric types (Int8 through Int64, UInt8 through UInt64, Float32, Float64), dedicated temporal types, nested types, and more:

Polars never coerces silently. Every type change must be declared explicitly with .cast(). You can inspect the current type of a column before acting on it:

full_flights['Year'].dtypeInt64To convert the Year column from integer to string:

full_flights = full_flights.with_columns(

pl.col('Year').cast(pl.String)

)

full_flights['Year'].dtypeStringIf .cast() cannot perform the conversion safely (e.g. casting "abc" to Int64), Polars raises an error immediately rather than producing a null or a wrong value — unless you explicitly opt in to lenient casting with strict=False.

The tradeoff is intentional: explicit casting is more verbose, but type contracts are visible in the code and mistakes fail loudly at the point of conversion. For production pipelines processing millions of rows, catching a type mismatch early is far cheaper than diagnosing a silently incorrect result downstream.

In Python, a method is a function that belongs to an object and is invoked using dot notation: object.method(). The object provides both the data and the set of operations available on it.

The distinction from a standalone function comes down to ownership:

# standalone functions — data is passed as an argument

len(full_flights)

pl.read_csv('./data/flights.csv')

# methods — the object is the implicit first argument

full_flights.shape

full_flights.head()

full_flights.filter(pl.col('OriginStateName') == 'Ohio')A method always has implicit access to the object it belongs to. A DataFrame method like .filter() knows it is operating on a DataFrame; a Series method like .mean() knows it is operating on a Series. This means methods are type-specific — .filter() exists on a DataFrame but not on a Series.

R’s tidyverse follows a function-first style: data is passed as the first argument to a verb, and the pipe operator chains those verbs into a pipeline:

# R / dplyr: functions act on data passed as an argument

full_flights |>

filter(OriginStateName == 'Ohio') |>

select(FlightDate, Origin, Dest)Python/Polars follows a method chaining style: the data object comes first, and methods are applied sequentially via the dot operator:

(

full_flights

.filter(pl.col('OriginStateName') == 'Ohio')

.select(['FlightDate', 'Origin', 'Dest'])

)The two patterns are structurally equivalent — both read as a left-to-right pipeline of transformations. The key difference is where the data lives: in R, data flows through functions as an argument; in Python, data is the object and methods are behaviors it carries with it.

One practical consequence of this is that in R, filter() works on any compatible object — data frames, tibbles, grouped tibbles. In Python, each type exposes its own set of methods, so you need to be aware of what type you are working with at each step of the chain.

Filtering rows in Polars maps directly to dplyr’s filter(). The function name is the same; the main syntax difference is that Polars requires column references to be wrapped in pl.col().

# dplyr

flights_from_ohio <- full_flights |> filter(OriginStateName == "Ohio")flights_from_ohio = full_flights.filter(pl.col('OriginStateName') == 'Ohio')

flights_from_ohio.shape(7434, 110)# dplyr — comma-separated predicates are implicitly AND-ed

flights_from_ohio_to_virginia <- full_flights |>

filter(OriginStateName == "Ohio", DestStateName == "Virginia")# note that each predicate must be enclosed within parentheses when using &

flights_from_ohio_to_virginia = full_flights.filter(

(pl.col('OriginStateName') == 'Ohio') & (pl.col('DestStateName') == 'Virginia')

)

# comma-separated predicates are also AND-ed, avoiding the need for parentheses

# flights_from_ohio_to_virginia = full_flights.filter(

# pl.col('OriginStateName') == 'Ohio',

# pl.col('DestStateName') == 'Virginia'

# )

flights_from_ohio_to_virginia.shape(564, 110)# dplyr

flights_from_ohio_except_virginia <- full_flights |>

filter(OriginStateName == "Ohio", DestStateName != "Virginia")flights_from_ohio_except_virginia = full_flights.filter(

pl.col('OriginStateName') == 'Ohio',

~(pl.col('DestStateName') == 'Virginia')

)

flights_from_ohio_except_virginia.shape(6870, 110).is_in(), the equivalent of R’s %in% operator:# dplyr

major_hubs <- full_flights |>

filter(OriginStateName %in% c("California", "Texas", "New York"))major_hubs = full_flights.filter(

pl.col('OriginStateName').is_in(['California', 'Texas', 'New York'])

)

major_hubs.shape(143765, 110).filter() over boolean indexing? The key reason is that .filter() integrates with Polars’ lazy execution engine, which pushes the predicate down to the scan and avoids loading unnecessary rows into memory:import time

start = time.perf_counter()

lazy_result = (

pl.scan_csv('./data/On_Time_Reporting_Carrier_On_Time_Performance_(1987_present)_2022_1.csv')

.filter(pl.col('OriginStateName') == 'Ohio')

.collect()

)

print(f"lazy: {time.perf_counter() - start:.3f}s")

start = time.perf_counter()

eager_result = (

pl.read_csv('./data/On_Time_Reporting_Carrier_On_Time_Performance_(1987_present)_2022_1.csv')

.filter(pl.col('OriginStateName') == 'Ohio')

)

print(f"eager: {time.perf_counter() - start:.3f}s")lazy: 0.520s

eager: 0.486sPolars offers three levels of column selection, progressing from explicit to declarative:

pl.col(dtype) selects all columns of a given typepolars.selectors) — a high-level API for flexible, composable selection based on types, name patterns, or propertiesSelectors are the recommended approach for anything beyond a simple name list. They produce code that reads closer to intent, composes naturally with set operations, and adapts automatically when the schema changes.

# dplyr

full_flights |> select(FlightDate, Tail_Number)full_flights.select(['FlightDate', 'Tail_Number']).head(3)| FlightDate | Tail_Number |

|---|---|

| str | str |

| "2022-01-14" | "N119HQ" |

| "2022-01-15" | "N122HQ" |

| "2022-01-16" | "N412YX" |

pl.col(dtype) selects all columns that match a given type. Useful for applying the same transformation to every column of a type:

# dplyr

full_flights |> select(where(is.double))

full_flights |> select(where(is.integer))full_flights.select(pl.col(pl.Float64)).head(3)| DepDelay | DepDelayMinutes | DepDel15 | TaxiOut | TaxiIn | ArrDelay | ArrDelayMinutes | ArrDel15 | Cancelled | Diverted | CRSElapsedTime | ActualElapsedTime | AirTime | Flights | Distance | CarrierDelay | WeatherDelay | NASDelay | SecurityDelay | LateAircraftDelay | TotalAddGTime | LongestAddGTime |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 |

| -3.0 | 0.0 | 0.0 | 28.0 | 4.0 | 4.0 | 4.0 | 0.0 | 0.0 | 0.0 | 88.0 | 95.0 | 63.0 | 1.0 | 323.0 | null | null | null | null | null | null | null |

| -10.0 | 0.0 | 0.0 | 19.0 | 5.0 | -24.0 | 0.0 | 0.0 | 0.0 | 0.0 | 88.0 | 74.0 | 50.0 | 1.0 | 323.0 | null | null | null | null | null | null | null |

| -6.0 | 0.0 | 0.0 | 16.0 | 12.0 | -13.0 | 0.0 | 0.0 | 0.0 | 0.0 | 88.0 | 81.0 | 53.0 | 1.0 | 323.0 | null | null | null | null | null | null | null |

full_flights.select(pl.col(pl.Int64)).head(3)| Quarter | Month | DayofMonth | DayOfWeek | DOT_ID_Reporting_Airline | Flight_Number_Reporting_Airline | OriginAirportID | OriginAirportSeqID | OriginCityMarketID | OriginStateFips | OriginWac | DestAirportID | DestAirportSeqID | DestCityMarketID | DestStateFips | DestWac | CRSDepTime | DepartureDelayGroups | CRSArrTime | ArrivalDelayGroups | DistanceGroup | DivAirportLandings |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 |

| 1 | 1 | 14 | 5 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -1 | 1352 | 0 | 2 | 0 |

| 1 | 1 | 15 | 6 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -1 | 1352 | -2 | 2 | 0 |

| 1 | 1 | 16 | 7 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -1 | 1352 | -1 | 2 | 0 |

polars.selectors provides a declarative selection API that closely mirrors dplyr’s selection helpers. Import it as cs by convention:

import polars.selectors as csSelect by data type:

# dplyr

full_flights |> select(where(is.numeric))

full_flights |> select(where(is.character))full_flights.select(cs.numeric()).head(3) # all Int*, UInt*, Float* columns| Quarter | Month | DayofMonth | DayOfWeek | DOT_ID_Reporting_Airline | Flight_Number_Reporting_Airline | OriginAirportID | OriginAirportSeqID | OriginCityMarketID | OriginStateFips | OriginWac | DestAirportID | DestAirportSeqID | DestCityMarketID | DestStateFips | DestWac | CRSDepTime | DepDelay | DepDelayMinutes | DepDel15 | DepartureDelayGroups | TaxiOut | TaxiIn | CRSArrTime | ArrDelay | ArrDelayMinutes | ArrDel15 | ArrivalDelayGroups | Cancelled | Diverted | CRSElapsedTime | ActualElapsedTime | AirTime | Flights | Distance | DistanceGroup | CarrierDelay | WeatherDelay | NASDelay | SecurityDelay | LateAircraftDelay | TotalAddGTime | LongestAddGTime | DivAirportLandings |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | f64 | f64 | f64 | i64 | f64 | f64 | i64 | f64 | f64 | f64 | i64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | i64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | i64 |

| 1 | 1 | 14 | 5 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -3.0 | 0.0 | 0.0 | -1 | 28.0 | 4.0 | 1352 | 4.0 | 4.0 | 0.0 | 0 | 0.0 | 0.0 | 88.0 | 95.0 | 63.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

| 1 | 1 | 15 | 6 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -10.0 | 0.0 | 0.0 | -1 | 19.0 | 5.0 | 1352 | -24.0 | 0.0 | 0.0 | -2 | 0.0 | 0.0 | 88.0 | 74.0 | 50.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

| 1 | 1 | 16 | 7 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -6.0 | 0.0 | 0.0 | -1 | 16.0 | 12.0 | 1352 | -13.0 | 0.0 | 0.0 | -1 | 0.0 | 0.0 | 88.0 | 81.0 | 53.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

full_flights.select(cs.string()).head(3) # all String columns| Year | FlightDate | Reporting_Airline | IATA_CODE_Reporting_Airline | Tail_Number | Origin | OriginCityName | OriginState | OriginStateName | Dest | DestCityName | DestState | DestStateName | DepTime | DepTimeBlk | WheelsOff | WheelsOn | ArrTime | ArrTimeBlk | CancellationCode | FirstDepTime | DivReachedDest | DivActualElapsedTime | DivArrDelay | DivDistance | Div1Airport | Div1AirportID | Div1AirportSeqID | Div1WheelsOn | Div1TotalGTime | Div1LongestGTime | Div1WheelsOff | Div1TailNum | Div2Airport | Div2AirportID | Div2AirportSeqID | Div2WheelsOn | Div2TotalGTime | Div2LongestGTime | Div2WheelsOff | Div2TailNum | Div3Airport | Div3AirportID | Div3AirportSeqID | Div3WheelsOn | Div3TotalGTime | Div3LongestGTime | Div3WheelsOff | Div3TailNum | Div4Airport | Div4AirportID | Div4AirportSeqID | Div4WheelsOn | Div4TotalGTime | Div4LongestGTime | Div4WheelsOff | Div4TailNum | Div5Airport | Div5AirportID | Div5AirportSeqID | Div5WheelsOn | Div5TotalGTime | Div5LongestGTime | Div5WheelsOff | Div5TailNum | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str |

| "2022" | "2022-01-14" | "YX" | "YX" | "N119HQ" | "CMH" | "Columbus, OH" | "OH" | "Ohio" | "DCA" | "Washington, DC" | "VA" | "Virginia" | "1221" | "1200-1259" | "1249" | "1352" | "1356" | "1300-1359" | "" | "" | null | null | null | null | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | "2022-01-15" | "YX" | "YX" | "N122HQ" | "CMH" | "Columbus, OH" | "OH" | "Ohio" | "DCA" | "Washington, DC" | "VA" | "Virginia" | "1214" | "1200-1259" | "1233" | "1323" | "1328" | "1300-1359" | "" | "" | null | null | null | null | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | "2022-01-16" | "YX" | "YX" | "N412YX" | "CMH" | "Columbus, OH" | "OH" | "Ohio" | "DCA" | "Washington, DC" | "VA" | "Virginia" | "1218" | "1200-1259" | "1234" | "1327" | "1339" | "1300-1359" | "" | "" | null | null | null | null | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

Select by name pattern:

# dplyr

full_flights |> select(starts_with("Flight"))

full_flights |> select(ends_with("Delay"))full_flights.select(cs.starts_with('Flight')).head(3)| FlightDate | Flight_Number_Reporting_Airline | Flights |

|---|---|---|

| str | i64 | f64 |

| "2022-01-14" | 4879 | 1.0 |

| "2022-01-15" | 4879 | 1.0 |

| "2022-01-16" | 4879 | 1.0 |

full_flights.select(cs.ends_with('Delay')).head(3)| DepDelay | ArrDelay | CarrierDelay | WeatherDelay | NASDelay | SecurityDelay | LateAircraftDelay | DivArrDelay |

|---|---|---|---|---|---|---|---|

| f64 | f64 | f64 | f64 | f64 | f64 | f64 | str |

| -3.0 | 4.0 | null | null | null | null | null | null |

| -10.0 | -24.0 | null | null | null | null | null | null |

| -6.0 | -13.0 | null | null | null | null | null | null |

Combining selectors with set operations:

This is where selectors go beyond what bare pl.col() can express. Selectors support set operations using Python operators, making complex multi-criteria selections readable in a single expression:

| operator | meaning | dplyr equivalent |

|---|---|---|

| |

union — columns matching either selector | c(starts_with(), ...) |

& |

intersection — columns matching both selectors | where(\(x) ... & ...) |

- |

difference — left selector minus right | not available |

~ |

complement — all columns not matching | !selector |

# union: columns starting with "Flight" OR ending with "Delay"

full_flights.select(cs.starts_with('Flight') | cs.ends_with('Delay')).head(3)| FlightDate | Flight_Number_Reporting_Airline | DepDelay | ArrDelay | Flights | CarrierDelay | WeatherDelay | NASDelay | SecurityDelay | LateAircraftDelay | DivArrDelay |

|---|---|---|---|---|---|---|---|---|---|---|

| str | i64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | str |

| "2022-01-14" | 4879 | -3.0 | 4.0 | 1.0 | null | null | null | null | null | null |

| "2022-01-15" | 4879 | -10.0 | -24.0 | 1.0 | null | null | null | null | null | null |

| "2022-01-16" | 4879 | -6.0 | -13.0 | 1.0 | null | null | null | null | null | null |

# difference: numeric columns excluding Int64 (i.e. Float columns only)

full_flights.select(cs.numeric() - cs.integer()).head(3)| DepDelay | DepDelayMinutes | DepDel15 | TaxiOut | TaxiIn | ArrDelay | ArrDelayMinutes | ArrDel15 | Cancelled | Diverted | CRSElapsedTime | ActualElapsedTime | AirTime | Flights | Distance | CarrierDelay | WeatherDelay | NASDelay | SecurityDelay | LateAircraftDelay | TotalAddGTime | LongestAddGTime |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 |

| -3.0 | 0.0 | 0.0 | 28.0 | 4.0 | 4.0 | 4.0 | 0.0 | 0.0 | 0.0 | 88.0 | 95.0 | 63.0 | 1.0 | 323.0 | null | null | null | null | null | null | null |

| -10.0 | 0.0 | 0.0 | 19.0 | 5.0 | -24.0 | 0.0 | 0.0 | 0.0 | 0.0 | 88.0 | 74.0 | 50.0 | 1.0 | 323.0 | null | null | null | null | null | null | null |

| -6.0 | 0.0 | 0.0 | 16.0 | 12.0 | -13.0 | 0.0 | 0.0 | 0.0 | 0.0 | 88.0 | 81.0 | 53.0 | 1.0 | 323.0 | null | null | null | null | null | null | null |

# complement: everything except string columns

full_flights.select(~cs.string()).head(3)| Quarter | Month | DayofMonth | DayOfWeek | DOT_ID_Reporting_Airline | Flight_Number_Reporting_Airline | OriginAirportID | OriginAirportSeqID | OriginCityMarketID | OriginStateFips | OriginWac | DestAirportID | DestAirportSeqID | DestCityMarketID | DestStateFips | DestWac | CRSDepTime | DepDelay | DepDelayMinutes | DepDel15 | DepartureDelayGroups | TaxiOut | TaxiIn | CRSArrTime | ArrDelay | ArrDelayMinutes | ArrDel15 | ArrivalDelayGroups | Cancelled | Diverted | CRSElapsedTime | ActualElapsedTime | AirTime | Flights | Distance | DistanceGroup | CarrierDelay | WeatherDelay | NASDelay | SecurityDelay | LateAircraftDelay | TotalAddGTime | LongestAddGTime | DivAirportLandings |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | f64 | f64 | f64 | i64 | f64 | f64 | i64 | f64 | f64 | f64 | i64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | i64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | i64 |

| 1 | 1 | 14 | 5 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -3.0 | 0.0 | 0.0 | -1 | 28.0 | 4.0 | 1352 | 4.0 | 4.0 | 0.0 | 0 | 0.0 | 0.0 | 88.0 | 95.0 | 63.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

| 1 | 1 | 15 | 6 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -10.0 | 0.0 | 0.0 | -1 | 19.0 | 5.0 | 1352 | -24.0 | 0.0 | 0.0 | -2 | 0.0 | 0.0 | 88.0 | 74.0 | 50.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

| 1 | 1 | 16 | 7 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -6.0 | 0.0 | 0.0 | -1 | 16.0 | 12.0 | 1352 | -13.0 | 0.0 | 0.0 | -1 | 0.0 | 0.0 | 88.0 | 81.0 | 53.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

A full comparison between dplyr and Polars selection features:

| dplyr action | dplyr function | polars selector |

|---|---|---|

| matches all columns | everything() |

cs.all() |

| matches all numeric columns | where(is.numeric) |

cs.numeric() |

| matches all integer columns | where(is.integer) |

cs.integer() |

| matches all float columns | where(is.double) |

cs.float() |

| matches all string columns | where(is.character) |

cs.string() |

| matches all factor/categorical columns | where(is.factor) |

cs.categorical() |

| matches all date columns | where(\(x) class(x) == "Date") |

cs.date() |

| matches all datetime columns | not available | cs.datetime() |

| matches all time columns | not available | cs.time() |

| matches all temporal columns | not available | cs.temporal() |

| select the last column | last_col() |

cs.last() |

| starts with a prefix | starts_with() |

cs.starts_with() |

| ends with a suffix | ends_with() |

cs.ends_with() |

| contains a literal string | contains() |

cs.contains() |

| matches a regular expression | matches() |

cs.matches() |

| complement of a selection | !selector |

~selector |

| difference between selections | not available | cs_a - cs_b |

| range of consecutive columns | col1:col10 |

not available |

| any of a list, ignoring missing names | any_of() |

not available |

Note: All available selector functions can be found here

with_columns() is the Polars equivalent of dplyr’s mutate(). It adds new columns or overwrites existing ones and returns a new DataFrame:

# dplyr

full_flights |>

mutate(

DepDelay_hrs = DepDelay / 60,

IsDelayed = DepDelay > 0

)(

full_flights

.with_columns(

DepDelay_hrs = pl.col('DepDelay') / 60,

IsDelayed = pl.col('DepDelay') > 0

)

.select(['FlightDate', 'DepDelay', 'DepDelay_hrs', 'IsDelayed'])

.head(3)

)| FlightDate | DepDelay | DepDelay_hrs | IsDelayed |

|---|---|---|---|

| str | f64 | f64 | bool |

| "2022-01-14" | -3.0 | -0.05 | false |

| "2022-01-15" | -10.0 | -0.166667 | false |

| "2022-01-16" | -6.0 | -0.1 | false |

Overwriting an existing column uses the same syntax — assigning to a name that already exists replaces it:

# overwrite DepDelay from Float64 to Int32

full_flights.with_columns(

pl.col('DepDelay').cast(pl.Int32)

)['DepDelay'].dtypeInt32A key difference from dplyr is that all expressions inside a single with_columns() call are evaluated in parallel against the original DataFrame. This means you cannot reference a column created earlier in the same call:

# dplyr: works — mutate() evaluates expressions sequentially

full_flights |>

mutate(

DepDelay_hrs = DepDelay / 60,

IsLongDelay = DepDelay_hrs > 2 # references the column just created above

)# Polars: fails — DepDelay_hrs does not exist in the original DataFrame yet

full_flights.with_columns(

DepDelay_hrs = pl.col('DepDelay') / 60,

IsLongDelay = pl.col('DepDelay_hrs') > 2 # ColumnNotFoundError

)The fix is to split into two chained with_columns() calls:

(

full_flights

.with_columns(DepDelay_hrs = pl.col('DepDelay') / 60)

.with_columns(IsLongDelay = pl.col('DepDelay_hrs') > 2)

.select(['FlightDate', 'DepDelay', 'DepDelay_hrs', 'IsLongDelay'])

.head(3)

)| FlightDate | DepDelay | DepDelay_hrs | IsLongDelay |

|---|---|---|---|

| str | f64 | f64 | bool |

| "2022-01-14" | -3.0 | -0.05 | false |

| "2022-01-15" | -10.0 | -0.166667 | false |

| "2022-01-16" | -6.0 | -0.1 | false |

The parallel evaluation is intentional — it allows Polars to optimise multi-column mutations, which is a meaningful performance advantage for wide DataFrames.

.rename() takes a dictionary mapping old names to new names:

# dplyr — note the order: new_name = old_name

full_flights |> rename(departure_delay = DepDelay, arrival_delay = ArrDelay)# Polars dict order is the reverse: old_name -> new_name

(

full_flights

.rename({'DepDelay': 'departure_delay', 'ArrDelay': 'arrival_delay'})

.select(['FlightDate', 'departure_delay', 'arrival_delay'])

.head(3)

)| FlightDate | departure_delay | arrival_delay |

|---|---|---|

| str | f64 | f64 |

| "2022-01-14" | -3.0 | 4.0 |

| "2022-01-15" | -10.0 | -24.0 |

| "2022-01-16" | -6.0 | -13.0 |

Note the reversed convention: dplyr uses new_name = old_name, while Polars maps old_name → new_name.

.drop() removes one or more columns by name:

# dplyr

full_flights |> select(-Year, -Quarter)full_flights.drop(['Year', 'Quarter']).head(3)| Month | DayofMonth | DayOfWeek | FlightDate | Reporting_Airline | DOT_ID_Reporting_Airline | IATA_CODE_Reporting_Airline | Tail_Number | Flight_Number_Reporting_Airline | OriginAirportID | OriginAirportSeqID | OriginCityMarketID | Origin | OriginCityName | OriginState | OriginStateFips | OriginStateName | OriginWac | DestAirportID | DestAirportSeqID | DestCityMarketID | Dest | DestCityName | DestState | DestStateFips | DestStateName | DestWac | CRSDepTime | DepTime | DepDelay | DepDelayMinutes | DepDel15 | DepartureDelayGroups | DepTimeBlk | TaxiOut | WheelsOff | WheelsOn | … | Div1TotalGTime | Div1LongestGTime | Div1WheelsOff | Div1TailNum | Div2Airport | Div2AirportID | Div2AirportSeqID | Div2WheelsOn | Div2TotalGTime | Div2LongestGTime | Div2WheelsOff | Div2TailNum | Div3Airport | Div3AirportID | Div3AirportSeqID | Div3WheelsOn | Div3TotalGTime | Div3LongestGTime | Div3WheelsOff | Div3TailNum | Div4Airport | Div4AirportID | Div4AirportSeqID | Div4WheelsOn | Div4TotalGTime | Div4LongestGTime | Div4WheelsOff | Div4TailNum | Div5Airport | Div5AirportID | Div5AirportSeqID | Div5WheelsOn | Div5TotalGTime | Div5LongestGTime | Div5WheelsOff | Div5TailNum | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| i64 | i64 | i64 | str | str | i64 | str | str | i64 | i64 | i64 | i64 | str | str | str | i64 | str | i64 | i64 | i64 | i64 | str | str | str | i64 | str | i64 | i64 | str | f64 | f64 | f64 | i64 | str | f64 | str | str | … | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str |

| 1 | 14 | 5 | "2022-01-14" | "YX" | 20452 | "YX" | "N119HQ" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1221" | -3.0 | 0.0 | 0.0 | -1 | "1200-1259" | 28.0 | "1249" | "1352" | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| 1 | 15 | 6 | "2022-01-15" | "YX" | 20452 | "YX" | "N122HQ" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1214" | -10.0 | 0.0 | 0.0 | -1 | "1200-1259" | 19.0 | "1233" | "1323" | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| 1 | 16 | 7 | "2022-01-16" | "YX" | 20452 | "YX" | "N412YX" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1218" | -6.0 | 0.0 | 0.0 | -1 | "1200-1259" | 16.0 | "1234" | "1327" | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

To remove columns by type or name pattern, use .select() with a selector complement instead:

# remove all string columns

full_flights.select(~cs.string()).head(3)| Quarter | Month | DayofMonth | DayOfWeek | DOT_ID_Reporting_Airline | Flight_Number_Reporting_Airline | OriginAirportID | OriginAirportSeqID | OriginCityMarketID | OriginStateFips | OriginWac | DestAirportID | DestAirportSeqID | DestCityMarketID | DestStateFips | DestWac | CRSDepTime | DepDelay | DepDelayMinutes | DepDel15 | DepartureDelayGroups | TaxiOut | TaxiIn | CRSArrTime | ArrDelay | ArrDelayMinutes | ArrDel15 | ArrivalDelayGroups | Cancelled | Diverted | CRSElapsedTime | ActualElapsedTime | AirTime | Flights | Distance | DistanceGroup | CarrierDelay | WeatherDelay | NASDelay | SecurityDelay | LateAircraftDelay | TotalAddGTime | LongestAddGTime | DivAirportLandings |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | i64 | f64 | f64 | f64 | i64 | f64 | f64 | i64 | f64 | f64 | f64 | i64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | i64 | f64 | f64 | f64 | f64 | f64 | f64 | f64 | i64 |

| 1 | 1 | 14 | 5 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -3.0 | 0.0 | 0.0 | -1 | 28.0 | 4.0 | 1352 | 4.0 | 4.0 | 0.0 | 0 | 0.0 | 0.0 | 88.0 | 95.0 | 63.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

| 1 | 1 | 15 | 6 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -10.0 | 0.0 | 0.0 | -1 | 19.0 | 5.0 | 1352 | -24.0 | 0.0 | 0.0 | -2 | 0.0 | 0.0 | 88.0 | 74.0 | 50.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

| 1 | 1 | 16 | 7 | 20452 | 4879 | 11066 | 1106606 | 31066 | 39 | 44 | 11278 | 1127805 | 30852 | 51 | 38 | 1224 | -6.0 | 0.0 | 0.0 | -1 | 16.0 | 12.0 | 1352 | -13.0 | 0.0 | 0.0 | -1 | 0.0 | 0.0 | 88.0 | 81.0 | 53.0 | 1.0 | 323.0 | 2 | null | null | null | null | null | null | null | 0 |

Most analytical questions about groups of data follow a common pattern: divide the data into subsets based on one or more keys, apply a function to each subset independently, and reassemble the results. Wickham (2011) formalised this as the split-apply-combine strategy — the conceptual backbone behind group_by() in dplyr, Polars’ group_by() context, and pandas’ groupby().

The strategy has three steps:

grouped_df vs GroupBydplyr and Polars implement the split-apply-combine strategy differently, and understanding the difference prevents a whole class of subtle bugs.

dplyr wraps the data frame in a grouped_df object. The groups are sticky — they persist across the pipe and silently alter the behaviour of any subsequent verb until you call ungroup():

by_carrier <- full_flights |> group_by(Reporting_Airline)

class(by_carrier)

# "grouped_df" "tbl_df" "tbl" "data.frame"

by_carrier |> summarise(n = n(), mean_delay = mean(DepDelay, na.rm = TRUE))

by_carrier |> ungroup() # explicitly remove grouping when donePolars returns a GroupBy object from group_by() — not a DataFrame. The primary operation on a GroupBy is .agg(), which accepts one or more aggregating expressions. The object also exposes:

.len(), .sum(), .mean(), .min(), .max(), .first(), .last(), and others — each equivalent to calling .agg() with the corresponding expression.map_groups(fn) — applies a custom Python function to each group’s sub-DataFrame and concatenates the results; useful when the transformation cannot be expressed as a Polars expression.having(*predicates) — filters groups by a predicate before aggregation, equivalent to SQL’s HAVING clauseThere is no sticky state: each group_by() call is self-contained:

type(full_flights.group_by('Reporting_Airline'))polars.dataframe.group_by.GroupByThis design eliminates the class of bugs where a forgotten ungroup() causes downstream verbs to silently operate group-by-group. In Polars, grouping context is always explicit and local to a single expression.

The fundamental pattern in Polars is group_by() followed by .agg(). Multiple aggregations are passed as comma-separated keyword arguments:

# dplyr

full_flights |>

group_by(Reporting_Airline) |>

summarise(

n_flights = n(),

mean_delay = mean(DepDelay, na.rm = TRUE),

max_delay = max(DepDelay, na.rm = TRUE)

)(

full_flights

.group_by('Reporting_Airline')

.agg(

n_flights = pl.len(),

mean_delay = pl.col('DepDelay').mean(),

max_delay = pl.col('DepDelay').max()

)

.sort('Reporting_Airline')

)| Reporting_Airline | n_flights | mean_delay | max_delay |

|---|---|---|---|

| str | u32 | f64 | f64 |

| "9E" | 21644 | 8.163106 | 1111.0 |

| "AA" | 69400 | 7.928395 | 2512.0 |

| "AS" | 16549 | 8.076828 | 826.0 |

| "B6" | 21332 | 23.401837 | 1661.0 |

| "DL" | 68963 | 7.864645 | 1164.0 |

| … | … | … | … |

| "QX" | 8105 | 12.158578 | 530.0 |

| "UA" | 45741 | 13.073434 | 1436.0 |

| "WN" | 97436 | 10.244393 | 479.0 |

| "YV" | 11404 | 18.966965 | 1224.0 |

| "YX" | 27261 | 6.944988 | 1282.0 |

pl.len() counts rows in each group, equivalent to dplyr’s n(). The .sort() call is necessary because group_by() in Polars does not guarantee output order — dplyr’s summarise() preserves group key order by default.

To group by multiple columns, pass a list:

# dplyr

full_flights |>

group_by(Reporting_Airline, OriginStateName) |>

summarise(n_flights = n(), mean_delay = mean(DepDelay, na.rm = TRUE))(

full_flights

.group_by(['Reporting_Airline', 'OriginStateName'])

.agg(

n_flights = pl.len(),

mean_delay = pl.col('DepDelay').mean()

)

.sort(['Reporting_Airline', 'OriginStateName'])

.head(10)

)| Reporting_Airline | OriginStateName | n_flights | mean_delay |

|---|---|---|---|

| str | str | u32 | f64 |

| "9E" | "Alabama" | 391 | 4.720627 |

| "9E" | "Arkansas" | 91 | 0.735632 |

| "9E" | "Florida" | 138 | 15.873134 |

| "9E" | "Georgia" | 3931 | 5.657929 |

| "9E" | "Illinois" | 420 | 8.204938 |

| "9E" | "Indiana" | 405 | 6.776923 |

| "9E" | "Iowa" | 398 | 9.82398 |

| "9E" | "Kansas" | 7 | 3.0 |

| "9E" | "Kentucky" | 878 | 7.736277 |

| "9E" | "Louisiana" | 387 | 6.656085 |

Polars also lets you apply the same aggregation to multiple columns in a single expression using a multi-column selector and .name.suffix() to auto-generate output names — a feature with no direct dplyr equivalent:

# compute mean of all three delay-component columns in one expression

(

full_flights

.group_by('Reporting_Airline')

.agg(

pl.col('DepDelay', 'ArrDelay', 'CarrierDelay').mean().name.suffix('_mean')

)

.sort('Reporting_Airline')

.head(5)

)| Reporting_Airline | DepDelay_mean | ArrDelay_mean | CarrierDelay_mean |

|---|---|---|---|

| str | f64 | f64 | f64 |

| "9E" | 8.163106 | 1.984254 | 28.540738 |

| "AA" | 7.928395 | -1.075832 | 34.993137 |

| "AS" | 8.076828 | 5.233294 | 21.212693 |

| "B6" | 23.401837 | 18.721082 | 40.458421 |

| "DL" | 7.864645 | -0.228486 | 34.6591 |

One of dplyr’s defining features is that the same verb produces different output depending on whether grouping is active. The three most important cases are summarise(), mutate(), and filter().

summarise() reduces each group to one row — the familiar aggregation pattern shown above. Polars’ group_by().agg() is the direct equivalent.

Grouped mutate() computes within-group window statistics, keeping all rows intact:

# dplyr: add each carrier's average delay back to every flight row

full_flights |>

group_by(Reporting_Airline) |>

mutate(carrier_avg_delay = mean(DepDelay, na.rm = TRUE))

# → same number of rows; new column has the group mean repeated per rowPolars handles this with the .over() window function applied inside .with_columns(). Rather than grouping the DataFrame, .over() computes a group-level aggregate and broadcasts the result back to each row:

(

full_flights

.with_columns(

carrier_avg_delay = pl.col('DepDelay').mean().over('Reporting_Airline')

)

.select(['FlightDate', 'Reporting_Airline', 'DepDelay', 'carrier_avg_delay'])

.head(5)

)| FlightDate | Reporting_Airline | DepDelay | carrier_avg_delay |

|---|---|---|---|

| str | str | f64 | f64 |

| "2022-01-14" | "YX" | -3.0 | 6.944988 |

| "2022-01-15" | "YX" | -10.0 | 6.944988 |

| "2022-01-16" | "YX" | -6.0 | 6.944988 |

| "2022-01-17" | "YX" | -7.0 | 6.944988 |

| "2022-01-18" | "YX" | -6.0 | 6.944988 |

.over() makes the window function intent visible in the expression itself: you can see at a glance that this is a within-group broadcast, not a reduction.

Grouped filter() allows filtering by group-level conditions:

# dplyr: keep only carriers that operated more than 10,000 flights

full_flights |>

group_by(Reporting_Airline) |>

filter(n() > 10000)Polars achieves the same with .over() inside .filter():

full_flights.filter(

pl.len().over('Reporting_Airline') > 10_000

)| Year | Quarter | Month | DayofMonth | DayOfWeek | FlightDate | Reporting_Airline | DOT_ID_Reporting_Airline | IATA_CODE_Reporting_Airline | Tail_Number | Flight_Number_Reporting_Airline | OriginAirportID | OriginAirportSeqID | OriginCityMarketID | Origin | OriginCityName | OriginState | OriginStateFips | OriginStateName | OriginWac | DestAirportID | DestAirportSeqID | DestCityMarketID | Dest | DestCityName | DestState | DestStateFips | DestStateName | DestWac | CRSDepTime | DepTime | DepDelay | DepDelayMinutes | DepDel15 | DepartureDelayGroups | DepTimeBlk | TaxiOut | … | Div1TotalGTime | Div1LongestGTime | Div1WheelsOff | Div1TailNum | Div2Airport | Div2AirportID | Div2AirportSeqID | Div2WheelsOn | Div2TotalGTime | Div2LongestGTime | Div2WheelsOff | Div2TailNum | Div3Airport | Div3AirportID | Div3AirportSeqID | Div3WheelsOn | Div3TotalGTime | Div3LongestGTime | Div3WheelsOff | Div3TailNum | Div4Airport | Div4AirportID | Div4AirportSeqID | Div4WheelsOn | Div4TotalGTime | Div4LongestGTime | Div4WheelsOff | Div4TailNum | Div5Airport | Div5AirportID | Div5AirportSeqID | Div5WheelsOn | Div5TotalGTime | Div5LongestGTime | Div5WheelsOff | Div5TailNum | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| str | i64 | i64 | i64 | i64 | str | str | i64 | str | str | i64 | i64 | i64 | i64 | str | str | str | i64 | str | i64 | i64 | i64 | i64 | str | str | str | i64 | str | i64 | i64 | str | f64 | f64 | f64 | i64 | str | f64 | … | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str | str |

| "2022" | 1 | 1 | 14 | 5 | "2022-01-14" | "YX" | 20452 | "YX" | "N119HQ" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1221" | -3.0 | 0.0 | 0.0 | -1 | "1200-1259" | 28.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 15 | 6 | "2022-01-15" | "YX" | 20452 | "YX" | "N122HQ" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1214" | -10.0 | 0.0 | 0.0 | -1 | "1200-1259" | 19.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 16 | 7 | "2022-01-16" | "YX" | 20452 | "YX" | "N412YX" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1218" | -6.0 | 0.0 | 0.0 | -1 | "1200-1259" | 16.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 17 | 1 | "2022-01-17" | "YX" | 20452 | "YX" | "N405YX" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1217" | -7.0 | 0.0 | 0.0 | -1 | "1200-1259" | 32.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 18 | 2 | "2022-01-18" | "YX" | 20452 | "YX" | "N420YX" | 4879 | 11066 | 1106606 | 31066 | "CMH" | "Columbus, OH" | "OH" | 39 | "Ohio" | 44 | 11278 | 1127805 | 30852 | "DCA" | "Washington, DC" | "VA" | 51 | "Virginia" | 38 | 1224 | "1218" | -6.0 | 0.0 | 0.0 | -1 | "1200-1259" | 11.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … |

| "2022" | 1 | 1 | 6 | 4 | "2022-01-06" | "DL" | 19790 | "DL" | "N101DQ" | 1576 | 11042 | 1104205 | 30647 | "CLE" | "Cleveland, OH" | "OH" | 39 | "Ohio" | 44 | 10397 | 1039707 | 30397 | "ATL" | "Atlanta, GA" | "GA" | 13 | "Georgia" | 34 | 1355 | "1413" | 18.0 | 18.0 | 1.0 | 1 | "1300-1359" | 9.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 6 | 4 | "2022-01-06" | "DL" | 19790 | "DL" | "N883DN" | 1577 | 11433 | 1143302 | 31295 | "DTW" | "Detroit, MI" | "MI" | 26 | "Michigan" | 43 | 13303 | 1330303 | 32467 | "MIA" | "Miami, FL" | "FL" | 12 | "Florida" | 33 | 1422 | "1626" | 124.0 | 124.0 | 1.0 | 8 | "1400-1459" | 12.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 6 | 4 | "2022-01-06" | "DL" | 19790 | "DL" | "N831DN" | 1578 | 10821 | 1082106 | 30852 | "BWI" | "Baltimore, MD" | "MD" | 24 | "Maryland" | 35 | 10397 | 1039707 | 30397 | "ATL" | "Atlanta, GA" | "GA" | 13 | "Georgia" | 34 | 1329 | "1343" | 14.0 | 14.0 | 0.0 | 0 | "1300-1359" | 18.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 6 | 4 | "2022-01-06" | "DL" | 19790 | "DL" | "N989AT" | 1579 | 11057 | 1105703 | 31057 | "CLT" | "Charlotte, NC" | "NC" | 37 | "North Carolina" | 36 | 10397 | 1039707 | 30397 | "ATL" | "Atlanta, GA" | "GA" | 13 | "Georgia" | 34 | 1258 | "1257" | -1.0 | 0.0 | 0.0 | -1 | "1200-1259" | 15.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

| "2022" | 1 | 1 | 6 | 4 | "2022-01-06" | "DL" | 19790 | "DL" | "N815DN" | 1580 | 14869 | 1486903 | 34614 | "SLC" | "Salt Lake City, UT" | "UT" | 49 | "Utah" | 87 | 14057 | 1405702 | 34057 | "PDX" | "Portland, OR" | "OR" | 41 | "Oregon" | 92 | 2240 | "2231" | -9.0 | 0.0 | 0.0 | -1 | "2200-2259" | 10.0 | … | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | "" | null | null | "" | null | null | "" | "" | null |

dplyr 1.1.0 introduced the .by argument as an inline alternative to the group_by() + ungroup() pattern. It scopes the grouping to a single verb and always returns an ungrouped result:

# equivalent to group_by(Reporting_Airline) |> summarise(...) |> ungroup()

full_flights |>

summarise(

mean_delay = mean(DepDelay, na.rm = TRUE),

.by = Reporting_Airline

)Because Polars group_by() never produces sticky groups, every group_by() call in Polars is already per-operation by definition — there is no accumulated state to unwind. The closest parallel to .by’s ordering guarantee is maintain_order=True:

(

full_flights

.group_by('Reporting_Airline', maintain_order=True)

.agg(mean_delay = pl.col('DepDelay').mean())

)| Reporting_Airline | mean_delay |

|---|---|

| str | f64 |

| "YX" | 6.944988 |

| "OO" | 14.785588 |

| "UA" | 13.073434 |

| "YV" | 18.966965 |

| "DL" | 7.864645 |

| … | … |

| "WN" | 10.244393 |

| "9E" | 8.163106 |

| "AA" | 7.928395 |

| "AS" | 8.076828 |

| "B6" | 23.401837 |

The aggregations above reduce groups of rows to summary values. Row-wise aggregations work in the opposite direction: they aggregate across columns for each individual row — computing, for example, the total delay breakdown per flight.

dplyr handles this with rowwise(), which treats each row as its own single-row group:

# dplyr: rowwise sum of five delay-breakdown columns

full_flights |>

rowwise() |>

mutate(total_breakdown = sum(

c_across(c(CarrierDelay, WeatherDelay, NASDelay, SecurityDelay, LateAircraftDelay)),

na.rm = TRUE

))rowwise() is conceptually clear but slow — it iterates row by row. Polars avoids the problem entirely with horizontal aggregation functions that operate across a set of columns in a single vectorised pass:

dplyr rowwise() + c_across() |

Polars horizontal function |

|---|---|

sum(...) |

pl.sum_horizontal(...) |

mean(...) |

pl.mean_horizontal(...) |

min(...) |

pl.min_horizontal(...) |

max(...) |

pl.max_horizontal(...) |

any(...) |

pl.any_horizontal(...) |

all(...) |

pl.all_horizontal(...) |

(

full_flights

.with_columns(

total_breakdown = pl.sum_horizontal(

'CarrierDelay', 'WeatherDelay', 'NASDelay', 'SecurityDelay', 'LateAircraftDelay'

)

)

.select(['FlightDate', 'Reporting_Airline', 'DepDelay', 'total_breakdown'])

.head(5)

)| FlightDate | Reporting_Airline | DepDelay | total_breakdown |

|---|---|---|---|

| str | str | f64 | f64 |

| "2022-01-14" | "YX" | -3.0 | 0.0 |

| "2022-01-15" | "YX" | -10.0 | 0.0 |

| "2022-01-16" | "YX" | -6.0 | 0.0 |

| "2022-01-17" | "YX" | -7.0 | 0.0 |

| "2022-01-18" | "YX" | -6.0 | 0.0 |

Nulls are automatically excluded from horizontal aggregations, so there is no na.rm = TRUE equivalent needed.

Standard group_by() groups by the distinct values of a categorical column. Time-series analysis often requires a different kind of grouping: partitioning a continuous time axis into fixed-width intervals (e.g., all rows in the same calendar week) or computing a statistic over a sliding window anchored to each row’s position.

Polars provides two dedicated contexts for this: group_by_dynamic() and rolling(). Neither is available natively in dplyr — R users typically reach for {tsibble} and {slider} for equivalent operations. pandas offers resample() and .rolling() with broadly similar semantics, but Polars integrates both into its lazy execution engine and supports additional grouping keys alongside the time dimension.

group_by_dynamic() partitions a sorted time column into equal-width buckets and aggregates each bucket. The data must be sorted by the index column before calling it:

flights_ts = (

full_flights

.with_columns(pl.col('FlightDate').str.to_date('%Y-%m-%d'))

.sort('FlightDate')

)

(

flights_ts

.group_by_dynamic('FlightDate', every='1w')

.agg(

n_flights = pl.len(),

mean_delay = pl.col('DepDelay').mean()

)

)| FlightDate | n_flights | mean_delay |

|---|---|---|

| date | u32 | f64 |

| 2021-12-27 | 34910 | 33.273963 |

| 2022-01-03 | 122980 | 20.238917 |

| 2022-01-10 | 119410 | 5.123972 |

| 2022-01-17 | 121315 | 6.780405 |

| 2022-01-24 | 121236 | 6.790272 |

| 2022-01-31 | 18051 | 6.364568 |

The result contains one row per bucket; the FlightDate column holds the left boundary of each interval. Common every values: '1d' (daily), '1w' (weekly), '1mo' (monthly), '1y' (yearly).

Adding group_by= produces a separate time series per value of a categorical variable, combining dynamic grouping with ordinary grouping in one call:

(

flights_ts

.group_by_dynamic('FlightDate', every='1w', group_by='Reporting_Airline')

.agg(

n_flights = pl.len(),

mean_delay = pl.col('DepDelay').mean()

)

.sort(['Reporting_Airline', 'FlightDate'])

.head(10)

)| Reporting_Airline | FlightDate | n_flights | mean_delay |

|---|---|---|---|

| str | date | u32 | f64 |

| "9E" | 2021-12-27 | 1321 | 26.522825 |

| "9E" | 2022-01-03 | 4835 | 13.19467 |

| "9E" | 2022-01-10 | 4744 | 3.320499 |

| "9E" | 2022-01-17 | 4937 | 6.373097 |

| "9E" | 2022-01-24 | 5022 | 6.5 |

| "9E" | 2022-01-31 | 785 | 1.534045 |

| "AA" | 2021-12-27 | 4249 | 21.073306 |

| "AA" | 2022-01-03 | 15766 | 11.562363 |

| "AA" | 2022-01-10 | 15674 | 5.582257 |

| "AA" | 2022-01-17 | 15794 | 6.72687 |

rolling() computes aggregations over a sliding time window. For each row, it looks back period in time from the row’s index value and aggregates all rows that fall within that window — producing one result per input row:

# aggregate to daily level first, then apply a 7-day rolling window

daily_flights = (

flights_ts

.group_by('FlightDate')

.agg(

n_flights = pl.len(),

mean_delay = pl.col('DepDelay').mean()

)

.sort('FlightDate')

)

(

daily_flights

.rolling('FlightDate', period='7d')

.agg(

rolling_flights = pl.col('n_flights').sum(),

rolling_mean_delay = pl.col('mean_delay').mean()

)

)| FlightDate | rolling_flights | rolling_mean_delay |

|---|---|---|

| date | u32 | f64 |

| 2022-01-01 | 15852 | 28.465361 |

| 2022-01-02 | 34910 | 32.830963 |

| 2022-01-03 | 54126 | 32.718238 |

| 2022-01-04 | 71091 | 30.785751 |

| 2022-01-05 | 87954 | 28.408098 |

| … | … | … |

| 2022-01-27 | 121202 | 5.648582 |

| 2022-01-28 | 121204 | 6.863473 |

| 2022-01-29 | 121223 | 6.736217 |

| 2022-01-30 | 121236 | 6.703149 |

| 2022-01-31 | 121233 | 6.475201 |

The key distinction between the two contexts:

group_by_dynamic() |

rolling() |

|

|---|---|---|

| Output rows | One per bucket | One per input row |

| Window type | Fixed interval buckets | Sliding window per row |

| Use case | Time-based summaries (weekly totals) | Moving averages, cumulative stats |

Sorting in Polars maps directly to dplyr’s arrange(). The key differences are syntax and a small set of additional options that Polars exposes for controlling null placement and sort stability.

# dplyr — ascending by default

full_flights |> arrange(DepDelay)full_flights.sort('DepDelay').select(['FlightDate', 'Origin', 'Dest', 'DepDelay']).head(5)| FlightDate | Origin | Dest | DepDelay |

|---|---|---|---|

| str | str | str | f64 |

| "2022-01-04" | "DCA" | "CMH" | null |

| "2022-01-03" | "DCA" | "MEM" | null |

| "2022-01-29" | "ATL" | "LGA" | null |

| "2022-01-03" | "DCA" | "PWM" | null |

| "2022-01-03" | "PWM" | "DCA" | null |

dplyr wraps the column name in desc(). Polars uses the descending= argument, which accepts either a single boolean or a list of booleans when sorting by multiple columns:

# dplyr

full_flights |> arrange(desc(DepDelay))full_flights.sort('DepDelay', descending=True).select(['FlightDate', 'Origin', 'Dest', 'DepDelay']).head(5)| FlightDate | Origin | Dest | DepDelay |

|---|---|---|---|

| str | str | str | f64 |

| "2022-01-04" | "DCA" | "CMH" | null |

| "2022-01-03" | "DCA" | "MEM" | null |

| "2022-01-29" | "ATL" | "LGA" | null |

| "2022-01-03" | "DCA" | "PWM" | null |

| "2022-01-03" | "PWM" | "DCA" | null |

Pass a list of column names and a matching list of descending= flags:

# dplyr — sort by carrier ascending, then by departure delay descending

full_flights |> arrange(Reporting_Airline, desc(DepDelay))(

full_flights

.sort(['Reporting_Airline', 'DepDelay'], descending=[False, True])

.select(['Reporting_Airline', 'FlightDate', 'Origin', 'Dest', 'DepDelay'])

.head(10)

)| Reporting_Airline | FlightDate | Origin | Dest | DepDelay |

|---|---|---|---|---|

| str | str | str | str | f64 |

| "9E" | "2022-01-01" | "ICT" | "MSP" | null |

| "9E" | "2022-01-02" | "ATL" | "RST" | null |

| "9E" | "2022-01-03" | "ATW" | "MSP" | null |

| "9E" | "2022-01-03" | "PIT" | "LGA" | null |

| "9E" | "2022-01-17" | "CVG" | "DTW" | null |

| "9E" | "2022-01-03" | "LGA" | "PIT" | null |

| "9E" | "2022-01-29" | "CHS" | "JFK" | null |

| "9E" | "2022-01-02" | "ATL" | "MLU" | null |

| "9E" | "2022-01-07" | "LGA" | "XNA" | null |

| "9E" | "2022-01-16" | "VLD" | "ATL" | null |

dplyr follows R’s default: NA values sort to the end regardless of direction. Polars gives you explicit control via nulls_last=:

nulls_last=True — nulls appear at the bottom (matches R’s default)nulls_last=False — nulls appear at the top# nulls sorted to the top (Polars default)

full_flights.sort('DepDelay').select(['FlightDate', 'DepDelay']).head(5)| FlightDate | DepDelay |

|---|---|

| str | f64 |

| "2022-01-04" | null |

| "2022-01-03" | null |

| "2022-01-29" | null |

| "2022-01-03" | null |

| "2022-01-03" | null |

# nulls sorted to the bottom — matches R / dplyr behaviour

full_flights.sort('DepDelay', nulls_last=True).select(['FlightDate', 'DepDelay']).head(5)| FlightDate | DepDelay |

|---|---|

| str | f64 |

| "2022-01-03" | -52.0 |

| "2022-01-22" | -49.0 |

| "2022-01-23" | -41.0 |

| "2022-01-27" | -40.0 |

| "2022-01-22" | -40.0 |

By default, Polars uses an unstable sort, which does not guarantee the relative order of rows with equal sort keys. For most analytical tasks this is irrelevant and the unstable sort is faster. When reproducibility of tie-breaking matters — for example, when the output order is semantically significant — pass maintain_order=True:

full_flights.sort('Reporting_Airline', maintain_order=True).select(['Reporting_Airline', 'FlightDate']).head(5)| Reporting_Airline | FlightDate |

|---|---|

| str | str |

| "9E" | "2022-01-02" |

| "9E" | "2022-01-03" |

| "9E" | "2022-01-04" |

| "9E" | "2022-01-01" |

| "9E" | "2022-01-05" |

A comparison of the main options:

| task | dplyr | Polars |

|---|---|---|

| sort ascending | arrange(col) |

.sort('col') |

| sort descending | arrange(desc(col)) |

.sort('col', descending=True) |

| sort by multiple columns | arrange(col1, desc(col2)) |

.sort(['col1', 'col2'], descending=[False, True]) |

| nulls last | default behaviour | nulls_last=True |

| stable sort | default behaviour | maintain_order=True |

Joins combine two DataFrames by matching rows on one or more key columns. The table below is a quick reference for readers coming from dplyr:

| Category | Join type | dplyr | Polars |

|---|---|---|---|

| Equi joins | left | left_join(x, y) |

x.join(y, how='left') |

| right | right_join(x, y) |

x.join(y, how='right') |

|

| full | full_join(x, y) |

x.join(y, how='full') |

|

| inner | inner_join(x, y) |

x.join(y, how='inner') |

|

| semi | semi_join(x, y) |

x.join(y, how='semi') |

|

| anti | anti_join(x, y) |

x.join(y, how='anti') |

|

| Non-equi joins | cross | cross_join(x, y) |

x.join(y, how='cross') |

| inequality | inner_join(x, y, join_by(a >= b)) |

x.join_where(y, pl.col('a') >= pl.col('b')) |

|

| asof/rolling | left_join(x, y, join_by(closest(a <= b))) |

x.join_asof(y, ...) |

|

| overlap | inner_join(x, y, join_by(overlaps(...))) |

not available |

To illustrate joins, we use a small airline name lookup table alongside full_flights:

airlines = pl.DataFrame({

'carrier': ['AA', 'UA', 'DL', 'WN', 'B6', 'AS', 'NK', 'F9', 'G4', 'HA',

'YX', '9E', 'EV', 'MQ', 'OH', 'OO'],

'name': [

'American Airlines', 'United Airlines', 'Delta Air Lines',

'Southwest Airlines', 'JetBlue Airways', 'Alaska Airlines',

'Spirit Airlines', 'Frontier Airlines', 'Allegiant Air', 'Hawaiian Airlines',

'Republic Airways', 'Endeavor Air', 'ExpressJet Airlines', 'Envoy Air',

'PSA Airlines', 'SkyWest Airlines'

]

})Mutating joins add columns from the right table to the left, matching on shared keys. The four types differ only in how they handle unmatched rows:

nullnullnulldplyr provides a separate function for each join type; Polars uses a single .join() with a how= argument.

Left join — the most common in practice. Keeps every left row and fills unmatched right-table columns with null. When the key columns have different names in the two tables, use left_on= / right_on= in Polars and join_by(left_key == right_key) in dplyr:

# dplyr

full_flights |>

left_join(airlines, join_by(Reporting_Airline == carrier)) |>

select(FlightDate, Reporting_Airline, name, Origin, DepDelay)(

full_flights

.join(airlines, left_on='Reporting_Airline', right_on='carrier', how='left')

.select(['FlightDate', 'Reporting_Airline', 'name', 'Origin', 'DepDelay'])

.head(5)

)| FlightDate | Reporting_Airline | name | Origin | DepDelay |

|---|---|---|---|---|

| str | str | str | str | f64 |

| "2022-01-14" | "YX" | "Republic Airways" | "CMH" | -3.0 |

| "2022-01-15" | "YX" | "Republic Airways" | "CMH" | -10.0 |

| "2022-01-16" | "YX" | "Republic Airways" | "CMH" | -6.0 |

| "2022-01-17" | "YX" | "Republic Airways" | "CMH" | -7.0 |

| "2022-01-18" | "YX" | "Republic Airways" | "CMH" | -6.0 |

When key column names are identical in both tables, use on= (Polars) or a single-argument join_by() (dplyr):

# dplyr: single argument when names match

flights |> left_join(airlines, join_by(carrier))# Polars: on= when key names are identical

flights.join(airlines, on='carrier', how='left')Inner join — discards rows with no match in either table:

# dplyr

full_flights |>

inner_join(airlines, join_by(Reporting_Airline == carrier))(

full_flights

.join(airlines, left_on='Reporting_Airline', right_on='carrier', how='inner')

.select(['FlightDate', 'Reporting_Airline', 'name', 'Origin', 'DepDelay'])

.head(5)

)| FlightDate | Reporting_Airline | name | Origin | DepDelay |

|---|---|---|---|---|

| str | str | str | str | f64 |

| "2022-01-14" | "YX" | "Republic Airways" | "CMH" | -3.0 |

| "2022-01-15" | "YX" | "Republic Airways" | "CMH" | -10.0 |

| "2022-01-16" | "YX" | "Republic Airways" | "CMH" | -6.0 |

| "2022-01-17" | "YX" | "Republic Airways" | "CMH" | -7.0 |

| "2022-01-18" | "YX" | "Republic Airways" | "CMH" | -6.0 |

When both tables have non-key columns with the same name, Polars appends a suffix to the right table’s version. The default is '_right'; dplyr defaults to .x / .y:

# dplyr: control suffixes with suffix = c("", "_right")

x |> left_join(y, join_by(key), suffix = c("", "_right"))# Polars: suffix= controls what is appended to right-table duplicates (default: '_right')

x.join(y, on='key', how='left', suffix='_right')After joining on left_on='a' and right_on='b', Polars offers explicit control over whether both key columns appear in the output via coalesce=:

coalesce=None (default) — coalesce unless how='full'coalesce=True — always merge the two key columns into onecoalesce=False — keep both key columns separately, appending suffix to the right onedplyr always coalesces the join key into the left column name. The Polars coalesce=False option is useful when you want to inspect both keys after a full outer join to diagnose mismatches:

# coalesce=False: keeps both 'Reporting_Airline' (left) and 'carrier' (right)

(

full_flights

.join(airlines, left_on='Reporting_Airline', right_on='carrier', how='left', coalesce=False)

.select(['Reporting_Airline', 'carrier', 'name', 'DepDelay'])

.head(3)

)| Reporting_Airline | carrier | name | DepDelay |

|---|---|---|---|

| str | str | str | f64 |

| "YX" | "YX" | "Republic Airways" | -3.0 |

| "YX" | "YX" | "Republic Airways" | -10.0 |

| "YX" | "YX" | "Republic Airways" | -6.0 |

Filtering joins keep or discard left-table rows based on whether a match exists in the right table. Unlike mutating joins, they never add columns or duplicate rows — they only filter.

major_airports = pl.DataFrame({

'iata': ['ATL', 'LAX', 'ORD', 'DFW', 'DEN'],

'city': ['Atlanta', 'Los Angeles', 'Chicago', 'Dallas', 'Denver']

})Semi join — keeps left rows that have at least one match in the right table:

# dplyr: flights departing from a major hub

full_flights |> semi_join(major_airports, join_by(Origin == iata))full_flights.join(major_airports, left_on='Origin', right_on='iata', how='semi').shape(105677, 110)Anti join — keeps left rows that have no match in the right table:

# dplyr: flights that did NOT depart from any major hub

full_flights |> anti_join(major_airports, join_by(Origin == iata))full_flights.join(major_airports, left_on='Origin', right_on='iata', how='anti').shape(432225, 110)Neither semi nor anti joins add columns from the right table; the right table acts purely as a filter mask.

A common silent bug: a join expected to be many-to-one fans out unexpectedly because the right table has duplicate keys, inflating the output row count without any error.

dplyr warns by default when it detects an unexpected many-to-many relationship and requires explicit acknowledgement via relationship=:

# dplyr warns when many-to-many is detected

df1 |> inner_join(df2, join_by(key))

#> Warning: Detected an unexpected many-to-many relationship

# acknowledge explicitly

df1 |> inner_join(df2, join_by(key), relationship = "many-to-many")

# assert one-to-one — errors if either table has duplicate keys

df1 |> inner_join(df2, join_by(key), relationship = "one-to-one")Polars exposes this as the validate= parameter with four options:

validate= |

meaning | asserts |

|---|---|---|

'm:m' (default) |

many-to-many | no check |

'1:1' |

one-to-one | keys unique in both tables |

'1:m' |

one-to-many | keys unique in left table |

'm:1' |

many-to-one | keys unique in right table |

Unlike dplyr, Polars defaults to 'm:m' (no check) and raises an InvalidOperationError immediately when validation fails rather than warning. Declaring the expected relationship explicitly is good practice — it documents intent and catches data-quality problems early:

# assert that airlines has one row per carrier code (many-to-one join)

(

full_flights

.join(airlines, left_on='Reporting_Airline', right_on='carrier', how='left', validate='m:1')

.select(['FlightDate', 'Reporting_Airline', 'name', 'DepDelay'])

.head(5)

)| FlightDate | Reporting_Airline | name | DepDelay |

|---|---|---|---|

| str | str | str | f64 |

| "2022-01-14" | "YX" | "Republic Airways" | -3.0 |

| "2022-01-15" | "YX" | "Republic Airways" | -10.0 |

| "2022-01-16" | "YX" | "Republic Airways" | -6.0 |

| "2022-01-17" | "YX" | "Republic Airways" | -7.0 |

| "2022-01-18" | "YX" | "Republic Airways" | -6.0 |

Non-equi joins match rows using conditions other than equality — comparisons such as <, <=, >, or >=. Both dplyr and Polars support them, but with different syntax. In Polars they are separate methods rather than arguments to .join().

A cross join returns the Cartesian product — every left row paired with every right row. It requires no key:

# dplyr

df |> cross_join(df)# Polars: how='cross', no on= argument

carriers = full_flights.select('Reporting_Airline').unique()

origins = full_flights.select('Origin').unique().head(5)

# all carrier–origin combinations

carriers.join(origins, how='cross').shape(85, 2)Cross joins grow quadratically with table size; use only with small tables.

An inequality join matches rows where a value satisfies a relational condition (<, <=, >, >=) against a value in the right table. In dplyr this is expressed inside join_by(); in Polars it uses the dedicated .join_where() method, which accepts one or more Polars expression predicates AND-ed together:

# dplyr: pair employees with projects whose skill range they fall within

employees |>

inner_join(projects, join_by(skill_level >= min_skill, skill_level <= max_skill))employees = pl.DataFrame({

'name': ['Alice', 'Bob', 'Carol'],

'skill_level': [3, 7, 5]

})

projects = pl.DataFrame({

'project': ['Alpha', 'Beta', 'Gamma'],

'min_skill': [1, 5, 2],

'max_skill': [4, 9, 6]

})

employees.join_where(

projects,

pl.col('skill_level') >= pl.col('min_skill'),

pl.col('skill_level') <= pl.col('max_skill')

)| name | skill_level | project | min_skill | max_skill |

|---|---|---|---|---|

| str | i64 | str | i64 | i64 |

| "Bob" | 7 | "Beta" | 5 | 9 |

| "Carol" | 5 | "Beta" | 5 | 9 |

| "Carol" | 5 | "Gamma" | 2 | 6 |

| "Alice" | 3 | "Gamma" | 2 | 6 |

| "Alice" | 3 | "Alpha" | 1 | 4 |

.join_where() always performs an inner join; rows that satisfy none of the predicates are excluded.

An asof join is a left join that matches each left row to the nearest right row whose key is ≤ (or ≥) the left key, without requiring an exact match. This is the standard pattern for joining event data to a slowly-changing reference table — for example, attaching the most recently published fuel price to each daily flight summary.

In dplyr this uses join_by(closest(...)):

# dplyr: attach the most recent fuel price on or before each date

daily_summary |>

left_join(fuel_prices, join_by(closest(FlightDate >= price_date)))Polars provides join_asof(), a dedicated method that requires both tables to be sorted by the join key:

fuel_prices = pl.DataFrame({

'price_date': pl.Series(['2022-01-01', '2022-02-01']).str.to_date(),

'fuel_usd_per_gallon': [2.85, 2.97]

})

daily_summary = (

flights_ts

.group_by('FlightDate')

.agg(n_flights=pl.len(), mean_delay=pl.col('DepDelay').mean())

.sort('FlightDate')

)

daily_summary.join_asof(

fuel_prices,

left_on='FlightDate',

right_on='price_date',

strategy='backward'

)| FlightDate | n_flights | mean_delay | price_date | fuel_usd_per_gallon |

|---|---|---|---|---|

| date | u32 | f64 | date | f64 |

| 2022-01-01 | 15852 | 28.465361 | 2022-01-01 | 2.85 |

| 2022-01-02 | 19058 | 37.196565 | 2022-01-01 | 2.85 |

| 2022-01-03 | 19216 | 32.492788 | 2022-01-01 | 2.85 |

| 2022-01-04 | 16965 | 24.988289 | 2022-01-01 | 2.85 |

| 2022-01-05 | 16863 | 18.89749 | 2022-01-01 | 2.85 |

| … | … | … | … | … |

| 2022-01-27 | 18547 | 5.474907 | 2022-01-01 | 2.85 |

| 2022-01-28 | 18506 | 14.655222 | 2022-01-01 | 2.85 |

| 2022-01-29 | 14946 | 6.04183 | 2022-01-01 | 2.85 |

| 2022-01-30 | 18087 | 9.327244 | 2022-01-01 | 2.85 |

| 2022-01-31 | 18051 | 6.364568 | 2022-01-01 | 2.85 |

The strategy= parameter controls the matching direction:

strategy= |

matches the right row whose key is… |

|---|---|

'backward' (default) |

≤ the left key (most recent prior record) |

'forward' |

≥ the left key (next upcoming record) |

'nearest' |

closest to the left key (either direction) |

An optional tolerance= argument limits the matching window: tolerance='30d' returns null instead of a match if the nearest right key is more than 30 days away from the left key.